Anthropic Measured What AI Is Actually Doing to Jobs. Here Is What the Data Says.

The research is real, the methodology is honest, and the gap between what the CEO says and what the economists found is the story nobody is writing.

CONTENTS

What Anthropic’s Jobs Research Actually Says

What they actually built

The O-ring problem nobody wrote about

The finding that got the least coverage

How subjective is this research?

The Amodei problem

Why publishing this matters beyond the findings

What Anthropic’s Jobs Research Actually Says

Most coverage missed the three things that matter.

Anthropic published labor market research on 5 March 2026. It went viral, got summarised badly in most places, and the three findings that actually matter were either buried or ignored entirely.

The paper is here: anthropic.com/research/labor-market-impacts. The authors are Maxim Massenkoff and Peter McCrory. Read it before drawing conclusions, because the headline most people ran with, "AI has had almost no impact on jobs," is technically accurate and substantially misleading.

What they actually built

Most research on AI and jobs starts with a question: could a language model theoretically do this task? The answer, across most white-collar work, is yes. That produces alarming charts and gets cited in think pieces. It also tells you almost nothing about what is happening right now.

Massenkoff and McCrory asked a different question. They took the O*NET database, which maps around 800 US occupations to their constituent tasks, crossed it with existing research on theoretical LLM capability, and then filtered both through actual anonymised Claude usage data from the Anthropic Economic Index. The result is a new metric they call observed exposure. Not what AI could do to your job. What it is doing.

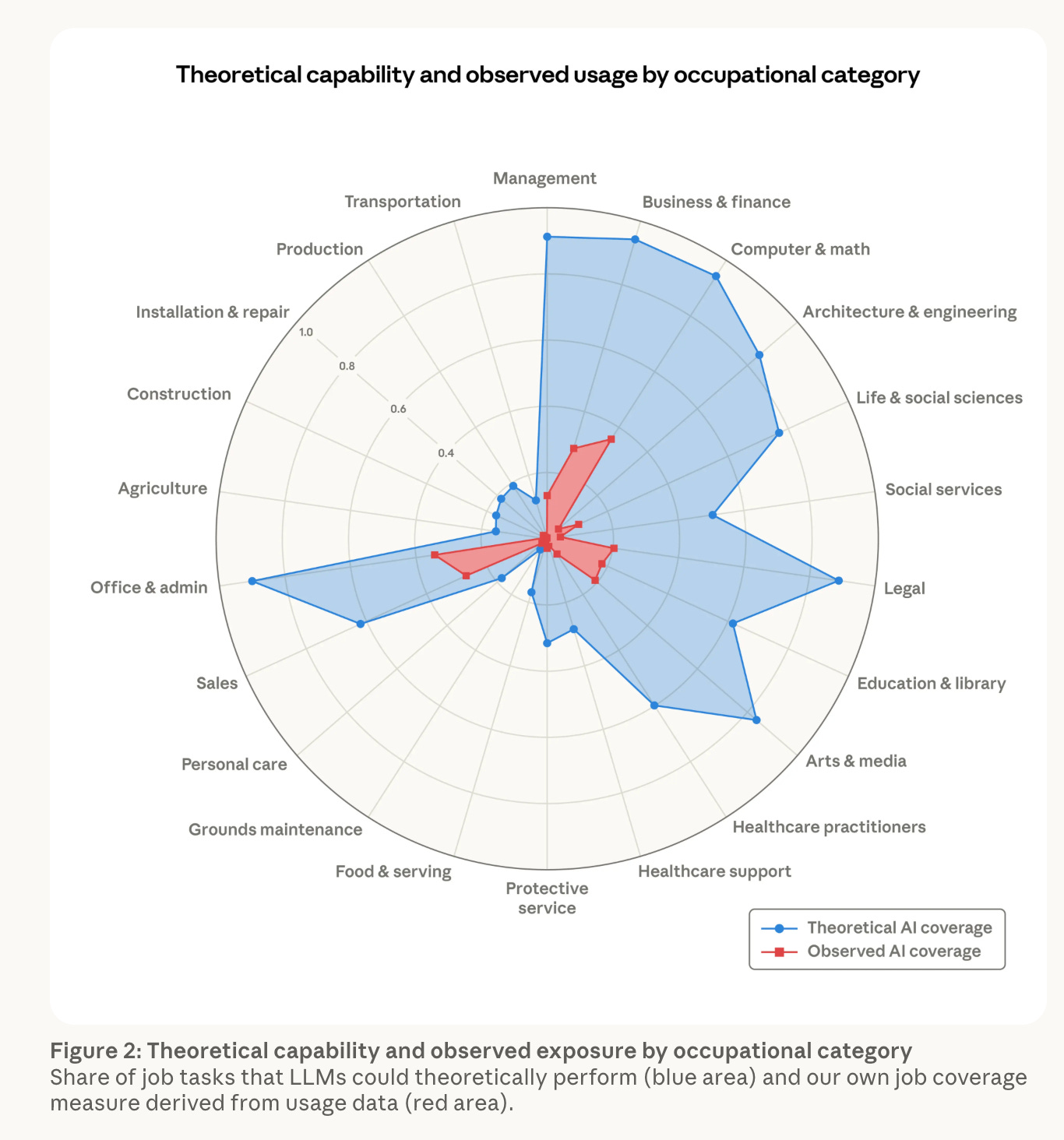

The gap between those two things is large and consistent across every white-collar category. Computer and math: 94% theoretical, 33% actual. Office and admin: 90% theoretical, 38% actual. Legal: 88% theoretical, 20% actual. Business and finance: 94% theoretical, 28% actual.
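The size of the gap can be worked out directly from the four pairs of figures quoted above. The short Python sketch below does only that arithmetic; the percentages come from the article, while the variable and function names are illustrative.

```python
# Theoretical vs. actual (observed) exposure in percent, as quoted in the text.
EXPOSURE = {
    "Computer and math": (94, 33),
    "Office and admin": (90, 38),
    "Legal": (88, 20),
    "Business and finance": (94, 28),
}


def exposure_gap(theoretical, observed):
    """Return (gap in percentage points, observed as a share of theoretical)."""
    gap_pp = theoretical - observed
    realised_share = observed / theoretical
    return gap_pp, realised_share


def summarise(table):
    """Apply exposure_gap to every category in the table."""
    return {
        category: exposure_gap(theoretical, observed)
        for category, (theoretical, observed) in table.items()
    }


if __name__ == "__main__":
    for category, (gap_pp, share) in summarise(EXPOSURE).items():
        print(f"{category}: gap {gap_pp} pp, {share:.0%} of theoretical exposure realised")
```

On these figures the gap runs from 52 points (office and admin) to 68 points (legal), and actual usage covers roughly 23% to 42% of what is theoretically possible.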

This is the chart that went around on LinkedIn and X, mostly shared as reassurance. The framing was: AI is nowhere near doing what it is theoretically capable of. The subtext was: relax.

That is the wrong reading. The gap is not a comfort. It is a trajectory.

The O-ring problem nobody wrote about

The paper cites a 2025 working paper by economists Gans and Goldfarb on what they call the O-ring model of jobs. The original O-ring theory, from economist Michael Kremer in 1993, argued that in production processes where all tasks need to be completed to a high standard, the weakest link determines the output. One failed component brings down the whole system.

Applied to AI and employment, the implication is specific and important. If jobs work like O-rings, then employment effects may only become visible when AI reaches all of the tasks in a role, not just some of them. A lawyer whose job involves drafting contracts, advising clients, appearing in court, and managing relationships is not at risk when AI can handle the drafting. The system holds until coverage is high enough across all components.

33% task coverage in computer and math does not mean 33% of that workforce is currently at risk. It may mean the signal will not appear in unemployment data until coverage crosses a threshold that makes the remaining human tasks structurally unviable. At which point the effect will look sudden even though it was not.
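A toy comparison makes the difference between the two readings concrete. The sketch below is an illustration under simplifying assumptions, not the model from the Gans and Goldfarb paper: it treats the lawyer example above as four equally weighted tasks, uses an all-or-nothing threshold, and the function names are hypothetical. A linear reading treats the share of tasks AI covers as the share of the job at risk. An O-ring style reading treats the role as exposed only once every task is covered.

```python
# Toy illustration only: equal task weights and an all-or-nothing threshold
# are simplifying assumptions, not the model in the cited working paper.
LAWYER_TASKS = [
    "drafting contracts",
    "advising clients",
    "appearing in court",
    "managing relationships",
]


def linear_risk(tasks, covered):
    """Share of tasks AI can handle, read naively as the share of the job at risk."""
    return sum(task in covered for task in tasks) / len(tasks)


def o_ring_at_risk(tasks, covered):
    """Weakest-link reading: the role is exposed only if every task is covered."""
    return all(task in covered for task in tasks)


if __name__ == "__main__":
    covered = set()
    for task in LAWYER_TASKS:
        covered.add(task)
        print(
            f"{len(covered)}/{len(LAWYER_TASKS)} tasks covered: "
            f"linear risk {linear_risk(LAWYER_TASKS, covered):.0%}, "
            f"O-ring at risk: {o_ring_at_risk(LAWYER_TASKS, covered)}"
        )
```

The linear measure climbs in steps of 25 points, while the O-ring flag stays False until the last task is covered. That is the sense in which the effect can look sudden.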

The authors are explicit that their framework is most useful when effects are ambiguous. The current absence of a mass unemployment signal is not evidence that nothing is happening. It is evidence that the methodology is working as designed, establishing a baseline before the numbers become undeniable.

The finding that got the least coverage

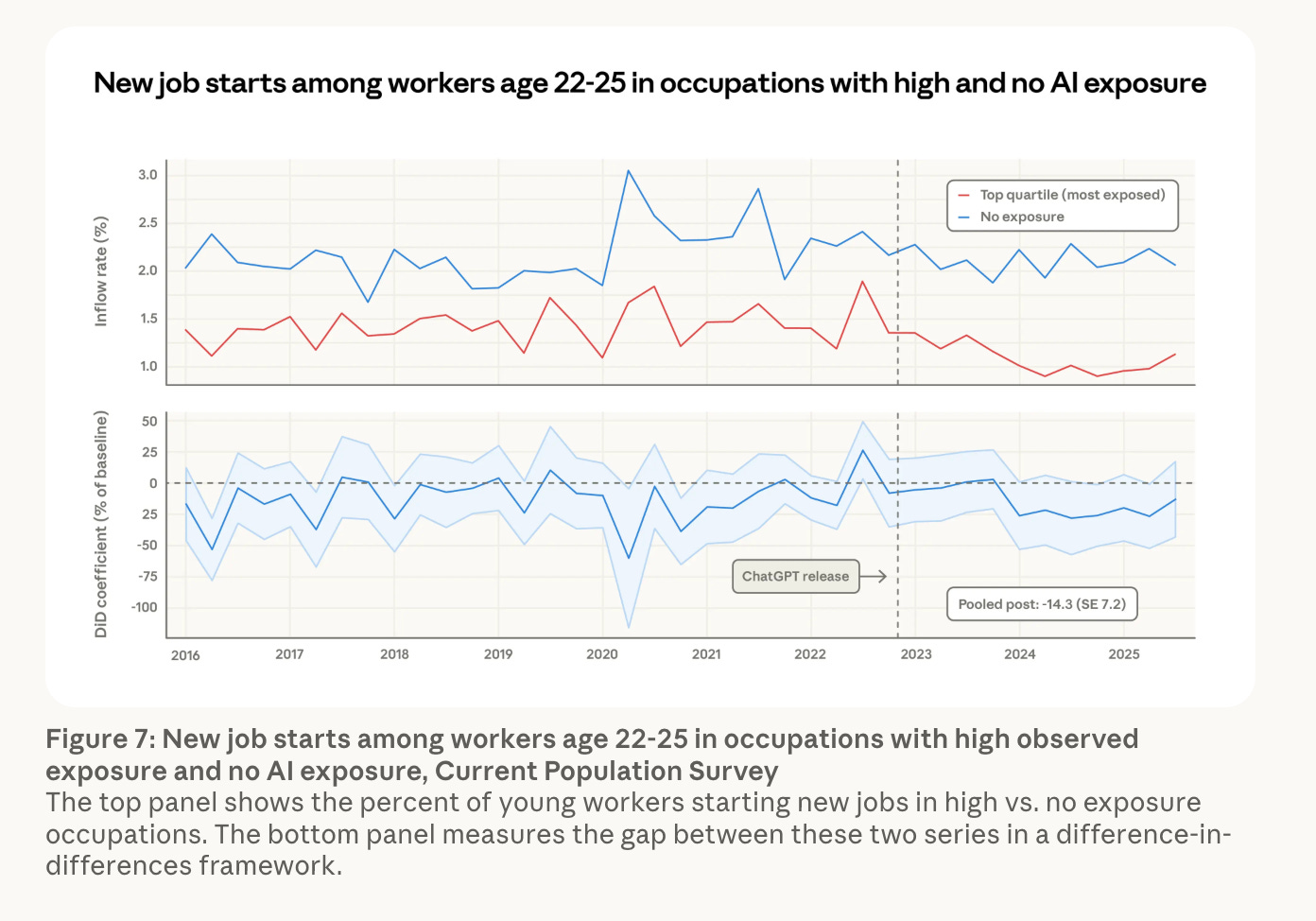

Figure 7 of the paper tracks monthly job-finding rates for workers aged 22 to 25 entering high-exposure versus low-exposure occupations. Worth noting: Anthropic corrected Figure 7 on 8 March, three days after publication, because the labels on the two series had been reversed. The underlying finding did not change.

The series diverge visibly from 2024. Job-finding rates in low-exposure occupations hold steady at around 2% per month. Entry into high-exposure roles falls by roughly half a percentage point. The averaged estimate across the post-ChatGPT period is a 14% drop in job-finding rates in exposed occupations compared to 2022. The authors describe this as just barely statistically significant. There is no equivalent signal for workers over 25.
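Percentage points and percent are easy to confuse in this paragraph, so a small conversion helps. The sketch below is plain arithmetic with a hypothetical function name. Both baselines are assumed values chosen for illustration, not figures from the paper, and the article does not say that the half-point fall and the 14% estimate describe the same comparison.

```python
def relative_drop(baseline_rate_pct, drop_pp):
    """Convert an absolute fall in percentage points into a relative fall.

    baseline_rate_pct: starting monthly job-finding rate, in percent.
    drop_pp: size of the fall, in percentage points.
    """
    return drop_pp / baseline_rate_pct


if __name__ == "__main__":
    # Hypothetical baselines (assumptions): the same half-point fall is a
    # very different relative change depending on where the rate started.
    for baseline in (2.0, 4.0):
        share = relative_drop(baseline, 0.5)
        print(f"baseline {baseline}% per month: a 0.5 pp fall is a {share:.1%} relative drop")
```

The point is only that a fall quoted in percentage points cannot be compared with a percent drop without knowing the baseline it is measured against.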

What this means in plain terms: nobody is getting fired. The entry point is narrowing. Senior staff are staying. The work that would previously have gone to junior employees is being absorbed without those positions being filled. Many of the young workers who do not get hired simply exit the labour market rather than register as unemployed, which is why aggregate unemployment data does not catch this.

The paper also shows that the workers most exposed are more likely to be female, more educated, and higher paid. Women are 16 percentage points more likely to be in the most exposed quartile than in the zero-exposure group. Workers with graduate degrees make up 17.4% of the most exposed group, compared to 4.5% of the unexposed group. For every 10 percentage point increase in observed exposure, BLS projected job growth through 2034 falls by 0.6 percentage points. That correlation does not exist when using theoretical exposure measures alone. Only the real usage data produces it.
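Read as a straight-line relationship, the growth figure above works out as follows. This is a sketch of the arithmetic only: it assumes the 0.6-point association holds linearly at every exposure level, which the article does not claim, and it describes a correlation across occupations, not a forecast for any one job. The function name is hypothetical.

```python
# Association quoted in the text: projected growth is 0.6 pp lower
# for every 10 pp of additional observed exposure.
SLOPE_PP_PER_PP = -0.6 / 10


def growth_difference_pp(exposure_difference_pp):
    """Difference in BLS projected growth (pp) associated with a given
    difference in observed exposure (pp), assuming linearity."""
    return SLOPE_PP_PER_PP * exposure_difference_pp


if __name__ == "__main__":
    for diff in (10, 20, 30):
        print(f"+{diff} pp exposure: {growth_difference_pp(diff):+.1f} pp projected growth")
```

Under that linearity assumption, an occupation 20 points more exposed than another would be associated with projected growth about 1.2 points lower.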

This is not the automation story most people pictured. The narrative was blue-collar work first: physical labour, manufacturing, delivery drivers. What the data shows is that the first visible effects are concentrated in screen-based, text-heavy, credential-intensive work, and they are showing up at the point of entry, not in the existing workforce.