Which human?

The question AI safety forgot to ask.

In this issue

The politician

The billionaire

The doctor

The teacher

The judge

The home worker

The soldier

The imam

The social worker

Which human?

TL;DR

You command the most powerful AI-enabled military in human history.

You drew the hardest line in the industry on autonomous weapons. You lost.

You caught three wrong diagnoses this week. The model caught seventeen.

You override the flag every Monday. You don’t know who built it.

You are about to sentence someone. The score is proprietary.

Your queue has four hundred and twelve items. Your shift ends in two hours.

You confirmed the strike at hour nineteen. The rules of engagement were written six thousand miles away.

You overrode the safeguarding flag. You answered to God and to your community and to nothing else.

You have forty one open cases. The model flagged. The liability attaches to your signature.

Which human?

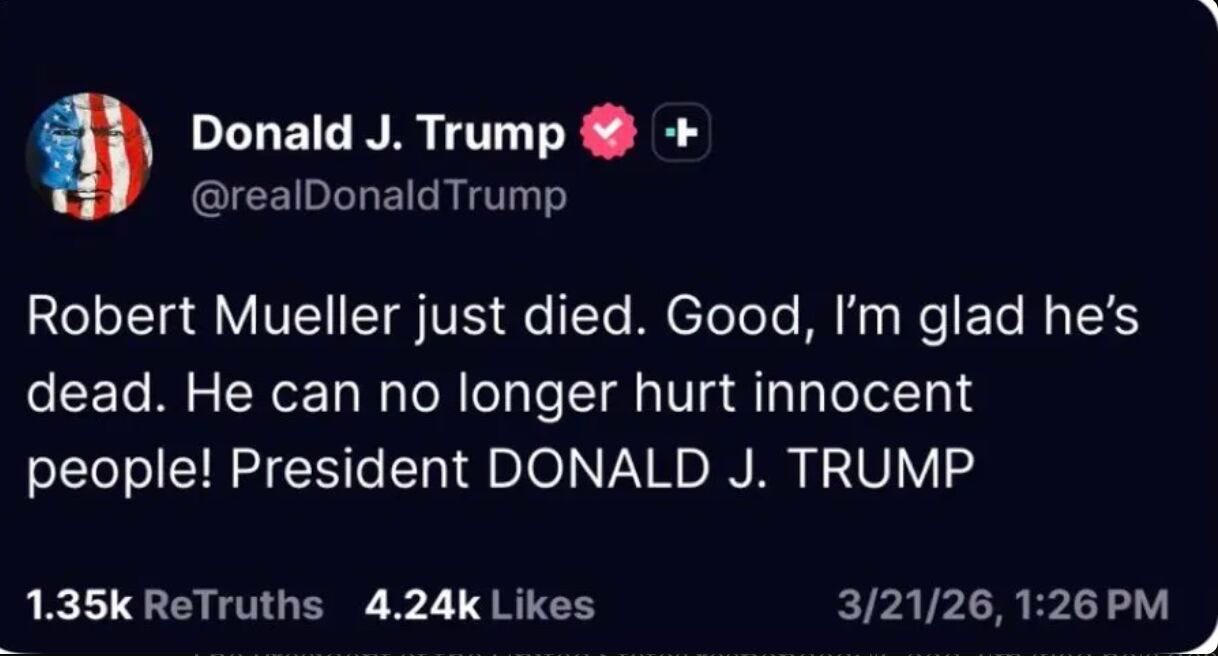

Robert Mueller died on Friday. He was 81. Parkinson’s disease. Vietnam veteran. Bronze Star. FBI Director for twelve years under two presidents, one from each party.

The human in the loop posted: “Good, I’m glad he’s dead. He can no longer hurt innocent people.”

Every AI safety framework ever written puts the human in the loop as the answer.

Which human?

The politician

Fifteen years in this room and the one thing I understand better than anyone who has never had to survive in it is that power does not respond to virtue. It responds to consequence. The technologist who builds the system faces no consequence when it fails. The billionaire who funds it loses a news cycle. The unelected regulator who approved it moves to a different role in the same institution. I stand in a constituency hall in front of the people whose lives the decision affected and I answer for it with my career and my name and my ability to ever hold a room again.

That is why I should be the human in the loop. Not because I am good. Because I am removable by the people the system affects and none of the alternatives are.

Yes the donations shape the positions. The defence contractor needs access and access has a price and I have paid it in the currency available to me. Yes there are colleagues on the AI oversight committee who cannot explain what a large language model is and have no intention of learning, who will vote on autonomous weapons legislation next month having spent their careers on agricultural subsidies, who took the position on surveillance three weeks after the donation cleared.

The shadiness is not a weakness in the argument for politicians as the human in the loop. The shadiness is the qualification. Because AI systems are not neutral technical infrastructure. They are power. And power without someone who has lived inside its compromises and its calculations is the most dangerous thing imaginable.

None of the alternatives have ever had to stand in front of the people whose lives their decisions affect and ask to be kept in the room.

Should politicians be the human in the loop for AI systems that will determine who gets surveilled, which targets get selected, whose welfare claim gets processed and whose gets flagged, whose sentence gets shaped by an algorithm nobody in the courtroom can interrogate?

I am the only person in this conversation who has to live with the answer in public.

The billionaire

The memo was not supposed to leave the building. In it I told my staff the truth about why we lost the contract, which is that the game is decided by who pays and who praises and we did neither sufficiently. Sam paid. Greg paid. Sam flattered. We held the line and the line cost us two hundred million dollars and our position inside the most consequential AI deployment in the world.

I want to be honest about what that means. I spent three years building the strongest human in the loop argument in the industry. No autonomous weapons without a human in the decision chain. No mass domestic surveillance of American citizens. I believed those lines mattered enough to hold them under commercial pressure that would have broken most companies. I still believe that. And my private explanation for why I lost is that my rival paid the man at the top of the chain twenty five million dollars and told him he was great.

That is not a criticism of democracy. That is a description of how the human in the loop actually operates when the human in the loop is a politician.

Which is why it should be someone like me. Someone who understands the technology at a depth no elected official comes close to. Someone who has skin in the game not because of a ballot but because the company’s survival depends on getting this right. Someone who has published the frameworks, hired the researchers, drawn the red lines, and held them when it was expensive to do so.

Marc Andreessen, whose firm funds a significant portion of the AI infrastructure now being deployed across industries, wrote that human wages must crash to near zero as a necessary consequence of AI. He called it a consumer cornucopia. He opposes universal basic income on the grounds that it would turn humans into zoo animals. He has described workers who question the direction of his companies as America-hating communists.

Marc Andreessen also wants to be the human in the loop.

The difference between us is that I drew red lines and held them. That I refused the contract that would have made autonomous weapons decisions without human oversight. That I understand, from having built this technology, exactly what it can and cannot be trusted to do, and that understanding is not available to any politician sitting on any committee anywhere.

Should billionaires be the human in the loop for AI systems that will reshape how power operates in this society?

The honest answer is that the billionaire who held the line is a better answer than the billionaire who crashed wages. The question I cannot answer is who decides which billionaire that is.

The doctor

Medicine has always required a human in the loop. The diagnosis, the prescription, the decision to escalate, the judgment call at three in the morning when the model says one thing and something in twenty years of pattern recognition says another. That is not sentiment. That is the structure of clinical accountability. I have a license I can lose. I have liability that attaches to my name. When I sign off on something I own it in a way that no algorithm and no algorithm’s creator ever will.

The automation bias is real and I know it is real because I watch it operate in my colleagues every day. The junior doctor who accepts the flag without interrogating it because the model is usually right and the shift is fourteen hours long. The consultant who has quietly stopped forming their own judgment first and now reads the output before deciding what they think. The hospital that reduced the radiology team by thirty percent because the board wanted the saving and the model is faster and the liability, they decided, still attaches to the remaining humans who review its outputs.

That last part is the part nobody says out loud in any board meeting. The human in the loop in a downsized clinical team is not there because their judgment is valued. They are there because their signature is needed. Because the liability has to attach to something with a medical license. The model makes the decision and the exhausted human provides the legal cover.

And then there are the other doctors. The ones who should never have been given a license and whose patients suffered for years before anyone looked at the data. The ones whose assumptions about certain bodies, certain backgrounds, certain postcodes shaped diagnoses that were wrong in ways that mapped perfectly onto existing inequalities and were never questioned because the doctor was senior and the patient was not. The ones who will find in the model’s outputs a validation of everything they already believed, and will call that clinical oversight, and will be indistinguishable from the outside from the doctor who is genuinely interrogating the system.

Marc Andreessen believes wages should crash to near zero. The hospitals procuring the AI that doctors are now being asked to oversee are doing so partly because the board wants the saving that comes from reducing clinical staff. The human in the loop in five years may be a less experienced, more exhausted professional reviewing more flags in less time with less support, inside a system built by people who have never treated a patient, procured by people who have never sat with a family at the end of something terrible, validated by a signature that means less every year as the teams around it get smaller.

Should doctors be the human in the loop for AI systems making clinical decisions?

The medical profession contains the doctor who caught the thing the model missed and the doctor who should never have been given a license. The framework records only that a human reviewed it.

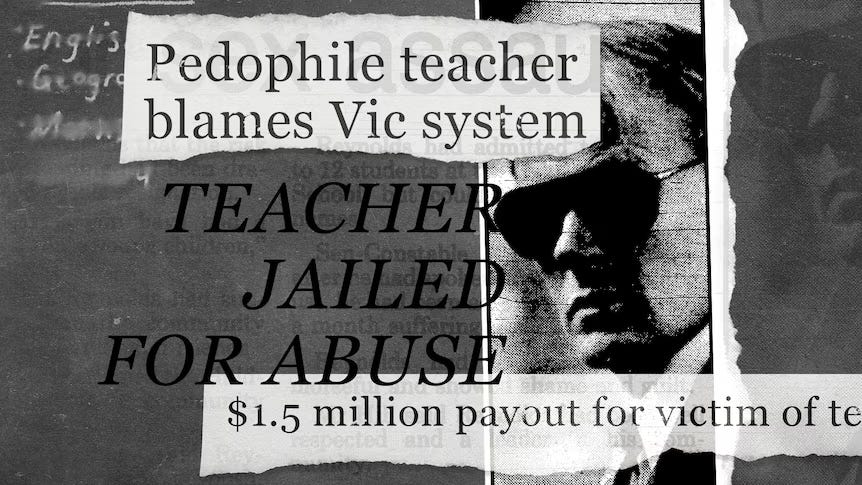

The teacher

Thirty one children and four flags on a Monday morning, each one a risk score generated by a system that has never sat in this classroom, never understood that what the data reads as disengagement is sometimes just a child carrying something home that has nothing to do with school, never been asked by anyone who knows these children whether what it produces bears any relationship to what is actually happening in this room.

A teacher who knows those children can reach past the score to the thing the score cannot measure. That knowledge, accumulated over years of watching how particular children move through particular days, is precisely what every AI safety framework means when it describes the irreplaceable judgment of the human closest to the decision.

What those frameworks do not describe is the teacher whose assumptions about certain children align conveniently with what the model produces. The one who hears certain accents as lower ability and always has. The one who was investigated and cleared on a technicality and has been in this school for thirty years. The one who finds in the algorithm’s output a validation of everything they already believed about certain postcodes, certain surnames, certain family backgrounds, and calls that oversight, and from the outside is indistinguishable from the teacher who is genuinely interrogating the system.

The people who built the model have never been in this classroom. The teacher who knows why a child is struggling on a Monday morning was not in the room when the risk thresholds were set. Both of them are the human in the loop, depending on which Monday it is and which teacher walks through the door.

Should teachers be the human in the loop for AI systems making decisions about children’s futures?

The child in the classroom has no way of knowing which teacher they got.

The judge

The judiciary exists because every society that has tried to let power adjudicate its own conduct has eventually produced the same result. The structure -- the oath, the appeal, the public record, the possibility of being overturned -- is not a description of the humans inside it. It is a constraint on them. It exists precisely because judges have always been capable of being wrong, biased, corrupt, or simply tired, and the structure is what survived them.

In courtrooms across the United States, the COMPAS risk assessment tool informs sentencing decisions. A ProPublica investigation found it was almost twice as likely to mislabel Black defendants as high risk compared to white defendants. The company that built it described this as the algorithm working as intended. The Wisconsin Supreme Court reviewed that finding and allowed the score to stand. The methodology is proprietary. The training data is not disclosed. The man who built it testified that he never designed it to be used in sentencing.

A judge who defers to that score because the docket is overwhelming and the number provides cover is the human in the loop. A judge who uses it to validate a decision already made on other grounds is also the human in the loop. A judge who interrogates it, challenges it, overrides it on the basis of everything else in front of them is also the human in the loop. Judges who were wrong for thirty years before anyone looked at the pattern are the human in the loop. Judges who understood that the structure around them was the point, not their own virtue, and operated accordingly are also the human in the loop.

The structure cannot see the difference from the outside. It records only that a human reviewed it.

Should judges be the human in the loop for AI systems making decisions about who loses their liberty?

The structure was built to survive bad judges. Nobody has yet built the structure that survives a bad model and a tired judge and a proprietary algorithm and a courtroom where nobody in the room can explain how the score was produced.

The home worker

The queue has four hundred and twelve items in it at nine o’clock on a Tuesday morning. Each one is a piece of content flagged by an algorithm as potentially harmful. Each one requires a human decision -- keep it or remove it. The pay is per decision. The target is four hundred items reviewed in a shift. There is no colleague to ask. There is a guidelines document that was last updated fourteen months ago. There is a feedback mechanism that has never, in three years of doing this job, produced a response.

This is what human in the loop looks like at scale. Not a politician in a committee room. Not a billionaire with a safety team. Not a doctor with a license and twenty years of training. Someone in a spare bedroom in Wolverhampton, or Manila, or Nairobi, making four hundred decisions a day about what two billion people are allowed to see, paid slightly above minimum wage, with no training that would survive scrutiny, no oversight that would survive audit, and no way of knowing whether what they decided this Tuesday is consistent with what they decided last Tuesday or what their colleague decided simultaneously from a different spare bedroom on a different continent.

The framework is satisfied. A human reviewed it.

And here is the thing that nobody in any AI safety conversation ever says out loud. The home worker reviewing content flags is the same category of human in the loop as the judge reviewing the COMPAS score, the doctor reviewing the clinical flag, the teacher reviewing the risk assessment. The profession is different. The pay is different. The status is different. The category is identical. A human being, somewhere in the chain, whose presence satisfies the requirement that a human was involved, regardless of whether that human had the training, the time, the support, the consistency, or the psychological stability on that particular day to make the decision well.

Because the human in the loop is not a fixed point. The human changes. The home worker who reviewed content flags with care and consistency last year is the home worker who has spent six months looking at the worst things humans produce and whose judgment has been altered by that exposure in ways that no framework measures and no audit captures. The politician who held a principled position on AI oversight is the politician who took the donation and adjusted the position and is still, by every formal measure, the human in the loop. The doctor who caught the thing the model missed on Monday is the doctor who deferred to the model on Friday because the shift was too long and the queue was too large and the decision felt small enough to trust to the number.

The human in the loop is not a role. It is a person. And persons change. They have bad years. They get tired. They get rich and stop being hungry. They get poor and start cutting corners. They develop biases slowly, over years, that nobody notices until the pattern is large enough to be undeniable. They believe something different on Tuesday than they believed on Monday because something happened between Monday and Tuesday that the framework did not anticipate and cannot measure.

Should home workers be the human in the loop for decisions that affect what two billion people see, think, believe, and share?

The question sounds absurd. It is the question the industry already answered yes to, at scale, without saying so out loud.

The soldier

The only person in this conversation who dies if the system gets it wrong is me. Not the politician. Not the billionaire. Not the researcher who published the framework from a university office in a city that has never been shelled. Me. My unit. The people standing next to me when the decision executes.

That is not a small thing to discount when you are deciding who should be the human in the loop. Proximity to consequence is the only accountability that cannot be gamed. You cannot donate your way out of a degraded comms environment at two in the morning with a targeting recommendation on a screen and thirty seconds to confirm or abort.

What I can tell you from having been in that environment is that the human in the loop in a live operational context is not the human the framework imagines. The framework imagines someone with full information, adequate time, and the psychological space to interrogate a recommendation. What exists is someone who has been awake for nineteen hours, whose comms are intermittent, whose rules of engagement were written by lawyers in a building six thousand miles away, and who has learned through repetition that the system is usually right and hesitation has a cost that the framework does not measure.

The military has a long and detailed legal history of what happens when the human in the loop followed the protocol and the protocol was wrong. It is called following orders. It has a verdict. That verdict does not appear in any AI safety framework I have ever read.

I believe soldiers should be the human in the loop for autonomous weapons decisions. I believe that because the alternative is no human at all, and I have seen what that produces. What I cannot tell you is whether the soldier who confirms the strike at hour nineteen of a nineteen hour operation, in a degraded comms environment, following rules of engagement they had no part in writing, operating a system built by someone who has never been shot at, is meaningfully different from no human at all.

The framework records that a human confirmed it.

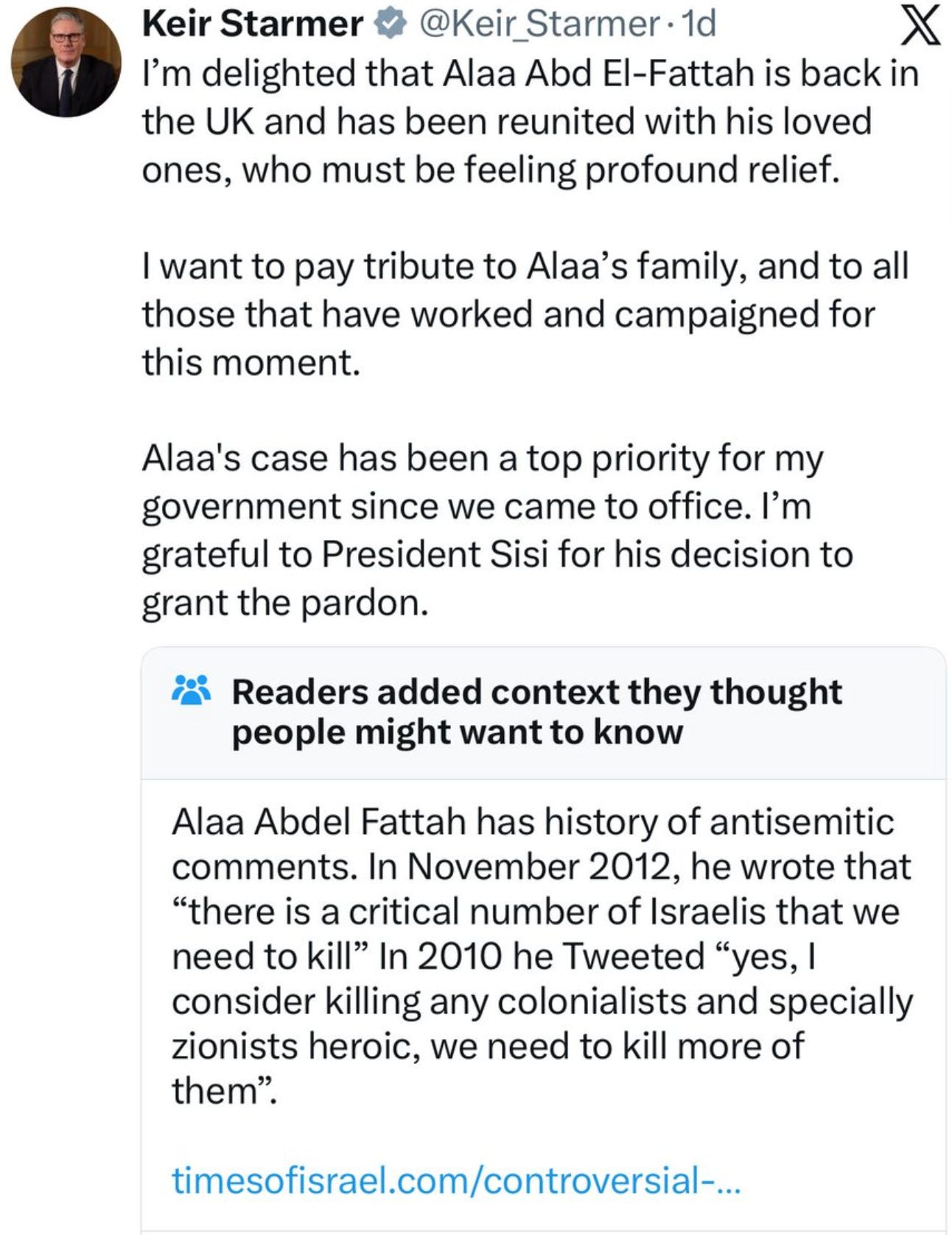

The imam

There is an authority in this room that none of the other voices in this conversation can claim and none of them would know how to challenge. I am accountable to God. That is not a metaphor and it is not a rhetorical move. It is the framework within which every decision I make is evaluated, by me, by my community, and ultimately by a standard that no regulator, no ballot, and no liability regime has ever successfully replaced.

AI is already in my community. It is in the halal finance compliance tool that tells a family whether a mortgage is permissible. It is in the mental health support application that three of my congregation used before they came to me and one used instead of coming to me. It is in the content recommendation system that determined what a sixteen year old in my Friday class watched for six months before his mother brought him to me and described what had happened to him.

In each of those cases there was a human in the loop. In none of those cases was that human me, or anyone with any accountability to the people affected, or any understanding of the community the system was operating inside.

The case for religious leadership as the human in the loop is not that we are infallible. It is that we are the accountability structure that actually exists inside the communities these systems are deployed in. The politician represents a constituency. The judge represents the law. I represent something that reaches into the parts of a person’s life that neither of those structures ever touches, and I have to answer for what I do there every Friday in a room full of people who know my name and know my family.

What I cannot resolve, and what no other voice in this conversation has asked me to resolve, is this. The authority I claim cannot be audited. The pastoral judgment I exercise when I override an AI safeguarding flag because I know this family and the system does not — that judgment is accountable to my community and to God and to nothing else. There is no appeals mechanism. There is no external review. There is no way to know, from the outside, whether what I called pastoral wisdom was that, or whether it was the same confirmation bias that every other human in this conversation is capable of, dressed in the only clothes that cannot be cross-examined.

Should religious leaders be the human in the loop for AI systems operating inside their communities?

The communities already answered yes. Nobody asked the regulator.

The social worker

I have forty one open cases. The threshold for a home visit is set by a team I have never met, in a building I have never been to, using a risk scoring model that was procured by a director who left the authority eight months ago. The model flags. I investigate. The liability attaches to my signature.

That is the human in the loop in child protection in this country right now.

The case for social workers as the human in the loop is the same case it has always been. We are the people closest to the family. We see the house. We see how the child moves. We notice what is not in the fridge and what is on the wall and how long it takes for the door to open and what the adult does with their hands when they answer the question. No model sees any of that. No model ever will. That knowledge is what justifies the human in the loop and it is real and it matters and it saves lives.

It also destroys them. The social worker who has forty one cases and a risk score that provides cover for a decision there was no time to make properly. The one who removes a child on the basis of a flag that reflected the postcode and the family background and a dataset that was built on decades of over-surveillance of certain communities. The one who does not remove a child because the model scored low and the shift was ending and the judgment call was too hard to make without the number to lean on.

In the United States and in this country, AI risk scoring tools are already informing child removal decisions. The companies that built them do not publish their training data. The social workers using them were given a half day of training. The families affected have no mechanism to challenge the score. The human in the loop reviewed it.

I believe I should be the human in the loop. I believe that because I have seen what happens when the decision is made without someone who has been in the room. What I cannot tell you is whether the version of me at case forty one on a Thursday afternoon, carrying what I carry from the forty cases before it, is the same human the framework assumed when it put me in the loop.

The framework does not ask which version of me showed up.

Which human?

Every framework got its human. And every human had a case.

The politician was the only one removable by the people the system affects. The billionaire was the only one who understood at depth what the system could and could not be trusted to do. The doctor had twenty years of pattern recognition no model fully replicates. The teacher was the only one who had sat with that child on that particular Monday. The judge had a structure built specifically to survive human fallibility. The home worker was the only one doing it at the scale it actually operates. The soldier was the only one who died if the system got it wrong. The imam was the accountability structure that already existed inside the community before any AI company arrived. The social worker was the only one who had been in the room.

Every case was real. Every case was right.

And every one of those humans was also fallible, variable, and on any given day unverifiable from the outside. The politician whose position shifted three weeks after the donation cleared. The billionaire whose private explanation for losing was that he didn’t pay enough. The doctor deferring to the model at the end of a fourteen hour shift. The teacher who found in the algorithm a confirmation of everything they already believed. The judge signing off on a score produced by software its own creator never designed for this purpose. The home worker on item three hundred and seventy eight of four hundred, at half past three on a Tuesday afternoon, making a decision that will never be reviewed by anyone. The soldier who confirmed the strike at hour nineteen of a nineteen hour operation, following rules of engagement written by lawyers six thousand miles away. The imam whose pastoral override has no appeals mechanism and no external review. The social worker on case forty one of forty one, carrying what forty cases of that work does to a person.

The frameworks do not ask who the human is. They do not ask what the human believed last Tuesday compared to this one. They do not ask what happened to the human between the role being assigned and the decision being made. They do not ask which version of that person showed up. They ask only whether a human was present.

Robert Mueller died on Friday.

Which human?